[Figure panels: Real Lighting, No Lighting, GLEAM]

Introduction

Mixed reality apps on mobile devices try to blend virtual objects naturally into the real world. For the result to look realistic, the lighting on virtual objects needs to match the lighting in the physical environment. To address this, we created GLEAM, a system that estimates real-world lighting in real time by analyzing reflections on physical objects, and that works alongside existing mobile AR tools. GLEAM can also connect multiple devices to capture lighting from different angles, making the virtual scene look even more realistic.

This system gives AR developers more flexibility to use materials that depend on accurate lighting, such as glass, water, or metal, which usually don’t look right in AR. In tests with 30 users, GLEAM outperformed standard methods such as ARKit’s lighting tools. It can adapt to different situations: updating lighting very quickly (in 30 ms) for fast-moving scenes, or more slowly (up to 400 ms) when high-quality lighting is needed. This lets GLEAM balance speed against visual quality depending on the needs of the scene.

- Support complex materials in AR: Expand the range of believable materials available to AR developers, such as glass, liquids, and metals.

- Scalable to multiple devices: Designed so that multiple devices can capture environmental lighting from different angles.

Method Overview

GLEAM observes reflective objects captured in the camera of a device to gather illumination samples from the scene using three modules: (a) Radiance Sampling, (b) Optional Network Transfer, and (c) Cubemap Composition.

(a) Radiance Sampling

AR systems understand the positioning of the camera with respect to a scene. Through the attachment of fiducial markers, reflective objects can be tracked. We design the GLEAM system to use the geometry of the camera’s position, the geometry of the reflective object’s position, and the pixels in the camera frame to sample the radiance of the environment.

To do so, GLEAM iterates over each camera frame pixel, projecting a virtual ray from the AR camera into the scene. GLEAM bounces the ray off the 3D mesh of the target specular object to determine the direction of the incoming light, and uses the pixel value to estimate radiance. The direction and radiance value together constitute a radiance sample.
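The per-pixel step can be illustrated with a minimal sketch. It is not GLEAM's implementation: it assumes the specular object is a perfect sphere rather than an arbitrary 3D mesh, takes the raw pixel color as the radiance estimate, and uses hypothetical helper names (`reflect`, `intersect_sphere`, `radiance_sample`).

```python
import math

def normalize(v):
    """Scale a 3-vector to unit length."""
    n = math.sqrt(sum(c * c for c in v))
    return tuple(c / n for c in v)

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def reflect(d, n):
    """Mirror direction d about unit normal n: r = d - 2 (d . n) n."""
    k = 2.0 * dot(d, n)
    return tuple(di - k * ni for di, ni in zip(d, n))

def intersect_sphere(origin, direction, center, radius):
    """Nearest hit point of a ray (unit direction) with a sphere, or None."""
    oc = tuple(o - c for o, c in zip(origin, center))
    b = dot(oc, direction)
    c = dot(oc, oc) - radius * radius
    disc = b * b - c
    if disc < 0.0:
        return None  # the ray misses the sphere
    t = -b - math.sqrt(disc)
    if t <= 0.0:
        return None  # the sphere is behind the camera (or the camera is inside it)
    return tuple(o + t * d for o, d in zip(origin, direction))

def radiance_sample(cam_pos, pixel_dir, pixel_rgb, center, radius):
    """Return (incoming_light_direction, radiance) for one pixel, or None."""
    d = normalize(pixel_dir)
    hit = intersect_sphere(cam_pos, d, center, radius)
    if hit is None:
        return None  # this pixel does not see the reflective object
    normal = normalize(tuple(h - c for h, c in zip(hit, center)))
    # The reflected ray points toward the part of the environment seen in this pixel.
    return reflect(d, normal), pixel_rgb
```

For a camera at the origin looking straight at a unit sphere centered at (0, 0, -5), the central pixel reflects back along (0, 0, 1), so that pixel samples the environment behind the camera.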

(b) Optional Network Transfer

GLEAM works with a single viewpoint for a single user. For richer estimation, however, multiple devices can capture and share radiance samples from multiple perspectives. Our system thus presents an opportunity to improve the estimation through collaborative sensing.
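As a rough illustration of sharing samples between devices, the sketch below serializes radiance samples to JSON and merges remote samples with local ones. The message layout, the function names, and the choice of JSON are all assumptions made for this example; the source does not specify a wire format or transport. It also assumes every device expresses sample directions in the same world coordinate frame.

```python
import json

def pack_samples(device_id, samples):
    """Encode (direction, rgb) samples as a JSON message (hypothetical format)."""
    payload = {
        "device": device_id,
        "samples": [{"dir": list(d), "rgb": list(c)} for d, c in samples],
    }
    return json.dumps(payload).encode("utf-8")

def unpack_samples(message):
    """Decode a message produced by pack_samples."""
    payload = json.loads(message.decode("utf-8"))
    samples = [(tuple(s["dir"]), tuple(s["rgb"])) for s in payload["samples"]]
    return payload["device"], samples

def merge_samples(local_samples, messages):
    """Combine local samples with samples received from other devices."""
    merged = list(local_samples)
    for message in messages:
        _, remote = unpack_samples(message)
        merged.extend(remote)
    return merged
```

The merged list can then be passed to the composition stage exactly as a single-device sample list would be.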

(c) Cubemap Composition

GLEAM interpolates the radiance samples into a cubemap, representing the physical lighting from all directions. Any gaps in the cubemap are filled through nearest-neighbor and inverse-distance-weighting algorithms. This cubemap is used to virtually illuminate the scene, imparting realism to virtual objects.
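The composition step can be sketched as follows. This is a simplified illustration, not GLEAM's algorithm: it fills every texel by inverse-distance weighting over all samples, using the angle between directions as the distance, and returns a sample unchanged when it lies exactly on a texel. The face layout is an arbitrary convention chosen for this example and does not match any particular graphics API.

```python
import math

# Each face is (major axis index, sign): +X, -X, +Y, -Y, +Z, -Z.
FACES = [(0, 1.0), (0, -1.0), (1, 1.0), (1, -1.0), (2, 1.0), (2, -1.0)]

def texel_direction(face, i, j, n):
    """Unit direction through the center of texel (i, j) on an n x n face."""
    axis, sign = FACES[face]
    a = 2.0 * (i + 0.5) / n - 1.0
    b = 2.0 * (j + 0.5) / n - 1.0
    v = [a, b]
    v.insert(axis, sign)  # place the major-axis component at its index
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def idw_radiance(direction, samples, power=2.0, eps=1e-6):
    """Inverse-distance-weighted radiance at a direction, using angular distance."""
    total = 0.0
    acc = [0.0, 0.0, 0.0]
    for s_dir, s_rgb in samples:
        cos_angle = max(-1.0, min(1.0, sum(x * y for x, y in zip(direction, s_dir))))
        dist = math.acos(cos_angle)
        if dist < eps:
            return tuple(s_rgb)  # the sample lies on this texel: use it directly
        w = 1.0 / dist ** power
        total += w
        for k in range(3):
            acc[k] += w * s_rgb[k]
    return tuple(c / total for c in acc)

def compose_cubemap(samples, n):
    """Return cubemap[face][i][j] = rgb, given (unit direction, rgb) samples."""
    if not samples:
        raise ValueError("at least one radiance sample is required")
    return [
        [[idw_radiance(texel_direction(f, i, j, n), samples) for j in range(n)]
         for i in range(n)]
        for f in range(6)
    ]
```

With one red sample along +X and one blue sample along -X, a 1x1-per-face cubemap keeps the two sampled faces as they are and blends the other four faces equally, since their centers are equidistant from both samples. Evaluating every sample for every texel costs time proportional to the number of texels times the number of samples, which is one reason resolution and sample count trade off against update rate.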

System Characterization

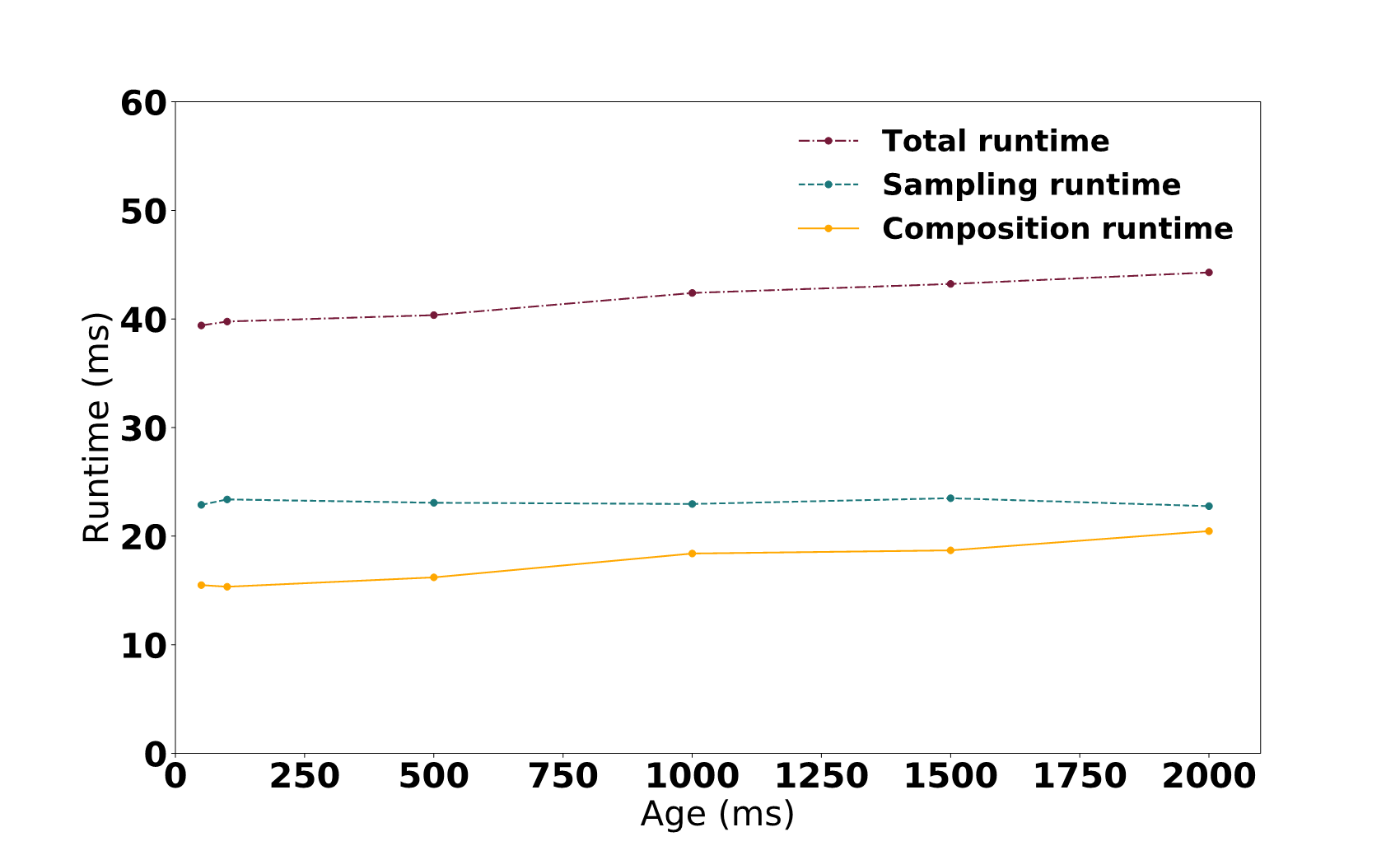

Characterizing the runtime performance of the GLEAM single-viewpoint prototype reveals the cost of generating a high number of samples and higher-resolution cubemaps at high update rates. These limitations give GLEAM an opportunity to trade off quality (resolution and fidelity) against speed (adaptiveness).